From Chatbot to Community Action: Lessons in Rapid Sensemaking in Crisis-Affected Contexts

Case study prepared by: Nicolas Chehade and Victoria Stanski

I. Introduction & Context

In early 2026, Common Good AI partnered with Social Innovation Hub (SHiFT) and the Municipality of Tripoli in Lebanon, with funding from the International Organization for Migration (IOM), to launch a pilot testing a more dynamic approach to civic engagement. Instead of completing a static survey, residents shared their priorities in real time through a WhatsApp chatbot (built on Twilio), and AI-powered analysis in Talk to the City (T3C) synthesized community infrastructure needs across ten neighborhoods.

The digital tool supported both text and voice notes, helping reduce literacy and accessibility barriers, and leveraged WhatsApp’s widespread use in Lebanon to boost participation. The pilot coincided with a major building collapse in the project area that killed 15 people, making the focus on infrastructure urgent and deeply resonant for residents.

II. Problem Statement

The core challenge was to move beyond institutional assumptions. SHiFT required a mechanism to:

Validate Community Priorities: Test whether established priorities (waste, lighting, education) matched the urgent, personal needs of residents.

Mobilize Local Communities to Bridge with the Diaspora: Confirm whether residents would participate in implementing neighborhood projects and act as bridges between local projects and financial support from the diaspora.

Trauma-Informed Outreach: Engage a community experiencing systemic infrastructure collapse without causing further distress.

III. Process

The project’s deployment included the following steps:

Community Needs Assessment: SHiFT held in-person consultations with small groups of residents to identify needs in their neighborhoods, and these needs were then validated with Tripoli Municipality authorities. Building on these initial priorities, the chatbot asked a wider circle of residents whether the identified community needs aligned with their immediate needs.

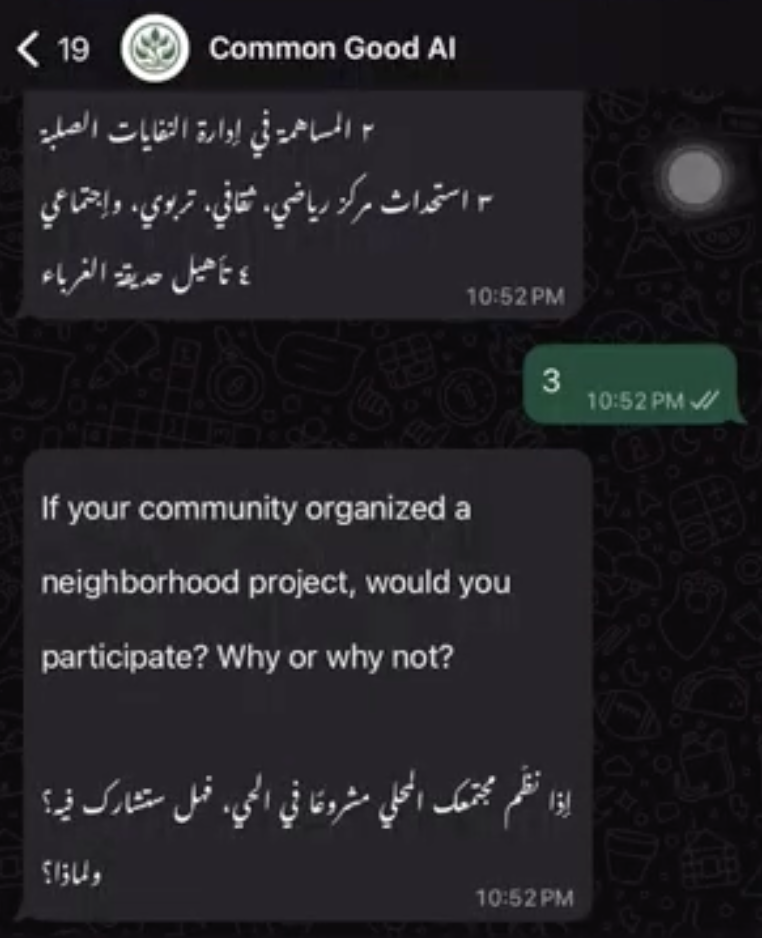

Technical Infrastructure: The project was built using Twilio for WhatsApp delivery. It works like an automated text conversation: when someone sends a message, the system reads it, selects the right response based on pre-set answers or AI, and replies instantly within the same chat. It creates an experience that feels like texting with a responsive assistant inside a familiar messaging app. See the chatbot demonstration here.
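
As a minimal sketch of this request-and-reply loop, assuming a plain-Python handler rather than the project's actual code: Twilio delivers each incoming WhatsApp message to a webhook as form-encoded fields (such as `From`, `Body`, `NumMedia`, and `MediaContentType0`) and expects a TwiML `<Response><Message>` document in return. The pre-set replies and the `ai_fallback` function below are hypothetical placeholders, not the project's script or model.

```python
from urllib.parse import parse_qs
from xml.sax.saxutils import escape

# Hypothetical pre-set answers keyed by the lowercased message text.
PRESET_REPLIES = {
    "help": "Reply YES to start the survey, or STOP to leave at any time.",
}


def ai_fallback(text: str) -> str:
    """Placeholder for an AI-generated reply; no model is called here."""
    return "Thank you, we have recorded your answer."


def handle_webhook(form_body: str) -> str:
    """Turn a form-encoded Twilio webhook body into a TwiML reply."""
    # parse_qs returns lists of values; keep the first value of each field.
    params = {key: values[0] for key, values in parse_qs(form_body).items()}
    text = params.get("Body", "").strip()
    num_media = int(params.get("NumMedia", "0"))

    if num_media > 0 and params.get("MediaContentType0", "").startswith("audio/"):
        # Voice notes arrive as a media URL (MediaUrl0); transcription would happen later.
        reply = "Thank you, we received your voice note."
    else:
        # Prefer a pre-set answer; otherwise use the (hypothetical) AI fallback.
        reply = PRESET_REPLIES.get(text.lower()) or ai_fallback(text)

    return f"<Response><Message>{escape(reply)}</Message></Response>"
```

For example, `handle_webhook("From=whatsapp%3A%2B96100000000&Body=help&NumMedia=0")` returns the pre-set help text wrapped in TwiML. In a deployment, a function like this would sit behind a web route that Twilio is configured to call.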

Anonymity, Consent & Multimodal Responses: To make the process inclusive, the chatbot was multimodal: residents could respond by text or voice note, which lowered literacy barriers. The process began with a strict opt-in, in which participants typed "yes" to confirm consent. They were told that the survey was confidential and that they could stop at any time.
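
The opt-in and stop-at-any-time rules can be sketched as a small per-participant state machine. This is a minimal illustration under stated assumptions: the `Session` structure is hypothetical, and the Arabic keywords (نعم for "yes", توقف for "stop") are assumed additions, since the case study only confirms that participants typed "yes".

```python
from dataclasses import dataclass, field
from typing import List

# Hypothetical keyword sets; the case study only confirms that "yes" was used for consent.
CONSENT_WORDS = {"yes", "نعم"}
STOP_WORDS = {"stop", "توقف"}


@dataclass
class Session:
    """Per-participant state (hypothetical structure)."""
    consented: bool = False
    stopped: bool = False
    answers: List[str] = field(default_factory=list)


def process(session: Session, message: str) -> str:
    """Apply the opt-in and stop-at-any-time rules before recording an answer."""
    text = message.strip().lower()
    if session.stopped:
        return "You have left the survey. No further answers will be recorded."
    if text in STOP_WORDS:
        session.stopped = True
        return "You have left the survey. Thank you for your time."
    if not session.consented:
        if text in CONSENT_WORDS:
            session.consented = True
            return ("Thank you. Your answers are confidential, "
                    "and you can reply STOP at any time.")
        # Nothing is stored until the participant explicitly opts in.
        return "Please reply YES if you agree to take part."
    session.answers.append(message.strip())
    return "Answer recorded."
```

Checking the stop words before anything else means a participant can leave at any point, including before consenting.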

Bottleneck Survey Logic: The chatbot's messages were in both English and Arabic. After collecting basic demographic information (location, age, gender, and nationality), the 8-question script moved from broad neighborhood concerns to specific, personal impacts. After gathering open-ended "challenges," the chatbot asked users to select first and second priorities from SHiFT's planned projects (e.g., waste management vs. solar lighting). This tested whether institutional plans aligned with the community's priorities.
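
One way to check whether institutional plans matched community priorities is to score each respondent's first and second picks. The case study does not say how the two ranks were combined, so the sketch below assumes a simple Borda-style weighting (2 points for a first choice, 1 for a second); the project names and responses are hypothetical.

```python
from collections import Counter
from typing import List, Tuple


def tally_priorities(rankings: List[Tuple[str, str]]) -> Counter:
    """Score (first, second) choices: 2 points for a first pick, 1 for a second (assumed weighting)."""
    scores: Counter = Counter()
    for first, second in rankings:
        scores[first] += 2
        scores[second] += 1
    return scores


def plan_is_top_choice(rankings: List[Tuple[str, str]], planned: str) -> bool:
    """True if the planned project has the highest score (ties count as a match)."""
    scores = tally_priorities(rankings)
    if not scores:
        return False
    return scores[planned] == max(scores.values())


# Hypothetical responses from one neighborhood.
rankings = [
    ("solar lighting", "waste management"),
    ("waste management", "solar lighting"),
    ("solar lighting", "sewage network"),
]
```

With these responses, solar lighting scores 5, waste management 3, and the sewage network 1, so a plan built around solar lighting would be confirmed as the community's top choice.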

Trauma-Informed Notices: Before asking the personal impact question, the chatbot issued a specific content notice: "You don't need to mention painful or sensitive details." This gave users the agency to disclose as much or as little as they felt comfortable with.

Streamlining AI Synthesis & Human Audit: To preserve the integrity of the data, the analysis after collection used a single-language stream approach, keeping the English and Arabic responses in separate datasets. T3C clustered the responses in each dataset into thematic maps showing areas of agreement and disagreement.
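
The case study does not describe how responses were routed into the two streams. If respondents were not already separated by the language they chose, a simple script check is one option. The sketch below, an assumption rather than the project's method, counts characters from the Arabic Unicode blocks against ASCII letters. It would misroute Arabic written in Latin script (often called Arabizi), so a human review of the split is still needed, and voice notes would need to be transcribed first.

```python
from typing import Dict


def is_arabic_char(ch: str) -> bool:
    """True for code points in the main Arabic Unicode blocks."""
    cp = ord(ch)
    return (
        0x0600 <= cp <= 0x06FF      # Arabic
        or 0x0750 <= cp <= 0x077F   # Arabic Supplement
        or 0xFB50 <= cp <= 0xFDFF   # Arabic Presentation Forms-A
        or 0xFE70 <= cp <= 0xFEFF   # Arabic Presentation Forms-B
    )


def detect_stream(text: str) -> str:
    """Route to 'arabic' if Arabic characters outnumber ASCII letters, else 'english'."""
    arabic = sum(1 for ch in text if is_arabic_char(ch))
    latin = sum(1 for ch in text if ch.isascii() and ch.isalpha())
    return "arabic" if arabic > latin else "english"


def split_streams(responses: Dict[str, str]) -> Dict[str, Dict[str, str]]:
    """Split {respondent_id: text} into separate Arabic and English datasets."""
    streams: Dict[str, Dict[str, str]] = {"arabic": {}, "english": {}}
    for respondent_id, text in responses.items():
        streams[detect_stream(text)][respondent_id] = text
    return streams
```

Each resulting dataset can then be analyzed on its own, which is the single-language stream approach described above.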

Findings Inform Community Informational Discussions: Using this data, SHiFT held informational events in each neighborhood to discuss the top findings and build support, complementing the digital approach with in-person engagement.

IV. Results & Findings

Out of 618 messages sent, we received responses from 127 individuals, an overall response rate of 21%. The survey used closed questions (demographics, binary Yes/No, and ranked project lists) to establish where consensus lay across the ten neighborhoods (for example, on solar lighting and waste management). Open questions (neighborhood challenges, personal impact, and solution ideation) captured high-urgency details such as sewage contamination and building collapse, which gave the team a record of residents' lived experiences as well.

Based on the responses, these were the identified infrastructure project priorities:

Table 1: Participation rates by neighborhood

| Neighborhood / حيّ | Messages Sent / الرسائل المرسلة | Response Rate / معدل الاستجابة | Project Selected / تم اختيار المشروع |
|---|---|---|---|
| 1-Zahriyye | 80 | 26% | Install solar lights + public safety cameras |
| 2-Nouri | 77 | 5% | Rehabilitate waste management network |
| 3-Tal | 97 | 12% | Install solar lights + public safety cameras |
| 4-Qobbeh | 84 | 29% | Install solar lights + public safety cameras |
| 5-Swaika | 52 | 17% | Install solar lights + public safety cameras |
| 6-Tebbeneh | 106 | 21% | Rehabilitate sewage network |
| 7-Abu Samra | 37 | 27% | Install solar lights + public safety cameras |
| 8-Jabal Mohsen | 39 | 54% | Rehabilitate central garden, soccer field, or wastewater |
| 9-Hadid | 23 | 9% | Rehabilitate public spaces or wastewater |
| 10-Haddadin | 23 | 13% | Rehabilitate water or wastewater network |
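
The headline figures can be checked against Table 1. The per-neighborhood counts of messages sent sum to 618, and 127 / 618 ≈ 20.6%, which rounds to the reported 21%. Multiplying each neighborhood's rounded response rate by its messages sent implies about 128 respondents, consistent with 127 once percentage rounding is taken into account. The short script below reproduces this arithmetic using only the numbers in the table.

```python
# Messages sent and rounded response rates, copied from Table 1.
TABLE_1 = {
    "Zahriyye": (80, 0.26), "Nouri": (77, 0.05), "Tal": (97, 0.12),
    "Qobbeh": (84, 0.29), "Swaika": (52, 0.17), "Tebbeneh": (106, 0.21),
    "Abu Samra": (37, 0.27), "Jabal Mohsen": (39, 0.54),
    "Hadid": (23, 0.09), "Haddadin": (23, 0.13),
}
RESPONDENTS = 127  # reported total

total_sent = sum(sent for sent, _ in TABLE_1.values())
overall_rate = RESPONDENTS / total_sent
# Respondents implied by the rounded per-neighborhood rates.
implied = sum(sent * rate for sent, rate in TABLE_1.values())
# Each rate is rounded to the nearest 1%, so the implied total can drift by up to 0.5% of messages sent.
max_rounding_error = 0.005 * total_sent

print(total_sent)             # 618
print(f"{overall_rate:.1%}")  # 20.6%
print(round(implied))         # 128
print(abs(implied - RESPONDENTS) <= max_rounding_error)  # True
```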

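The table below summarizes the open-ended responses by high-level theme. Note that T3C aggregated the Arabic and English responses differently, sorting claims into different topics and subtopics, so the themes do not line up exactly across the two languages (for example, "Public Health & Safety" appears only in the English report and "Safety and Security" only in the Arabic one). The summary reports are available here: Arabic and English.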

Table 2: Priority themes across open-ended questions

| Priority Theme | Arabic Responses | English Responses |
|---|---|---|
| Infrastructure | 66 people | 37 people |
| Environmental Cleanliness | 44 people | 41 people |
| Employment | 35 people | 36 people |
| Public Health & Safety | — | 38 people |
| Safety and Security | 21 people | — |

V. Lessons Learned & Recommendations

A. Data and technology-specific learnings

Deploy Chatbot for Initial Sensemaking: We recommend starting with the chatbot to gather high-level community needs. This surfaces a wider set of priorities that can then inform in-person engagement. It is the top of the "deliberative funnel," which can lead the team into deeper, more granular exploration of priorities and sharpen policy focus.

Factor in Administrative Delays: Even when the code and survey were ready, the primary bottleneck was approval from Twilio and Meta. We recommend allowing at least 14 days for the full approval process. The main challenge was obtaining approval to send outbound messages: both Twilio and Meta Platforms require clear, documented participant consent before messages can be delivered, in order to prevent spam and protect users' privacy. In this case, SHiFT had already collected participants' contact information through a mobilization drive in which individuals consented to participate in the project. This supported compliance with these requirements, but it was not immediately recognized by the technology providers.
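
Because outbound messages depend on documented consent, it can help to keep consent records in a form that is easy to show to providers and to filter on before sending. The sketch below is hypothetical: the `Contact` fields are assumptions, it does not reflect Twilio's or Meta's actual approval criteria, and it only encodes the two practical points above (send only to contacts with documented consent, and plan at least 14 days for approval).

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import List, Optional


@dataclass
class Contact:
    """A contact from the mobilization drive (hypothetical fields)."""
    phone: str
    consent_source: Optional[str] = None  # where consent was captured, e.g. a sign-up form
    consent_date: Optional[date] = None


def with_documented_consent(contacts: List[Contact]) -> List[Contact]:
    """Keep only contacts whose consent has both a recorded source and a date."""
    return [c for c in contacts if c.consent_source and c.consent_date]


def earliest_send_date(submitted: date, buffer_days: int = 14) -> date:
    """Earliest planned send date, allowing the recommended 14-day approval buffer."""
    return submitted + timedelta(days=buffer_days)
```

For example, an approval request submitted on 5 January 2026 would put the earliest planned send date at 19 January 2026.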

Single-Language Streamlining: For AI analysis engines such as Talk to the City, mixing languages in one dataset can create "noise" that confuses the analysis. Analyzing Arabic and English as separate streams preserves local idioms and technical terms, although it does require human project managers to review both datasets.

Localization vs. Translation & Auditing: While AI was a powerful aggregator in this instance, it did not catch nuanced, urgent matters. A major lesson was that AI summaries tend to stay high-level and focus on aggregate claims. This dilutes the urgency of individual insights and creates a gap between the summary and the raw community text. We suggest auditing the AI output against raw respondent IDs to catch major safety outliers.
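
An audit of this kind can be sketched as follows, assuming the summary can be exported as a mapping from each topic to the respondent IDs it cites (a hypothetical format, not T3C's actual export). For every raw response that mentions a safety term, the function lists the topics that cite it: an empty list means the response dropped out of the summary entirely, and a non-empty list shows where it was folded in so a reviewer can check whether its urgency survived.

```python
from typing import Dict, Iterable, List


def audit_summary(
    raw: Dict[str, str],
    summary: Dict[str, List[str]],
    safety_terms: Iterable[str],
) -> Dict[str, List[str]]:
    """Map each safety-related respondent ID to the summary topics that cite it."""
    terms = [t.lower() for t in safety_terms]
    report: Dict[str, List[str]] = {}
    for respondent_id, text in raw.items():
        lowered = text.lower()
        if any(term in lowered for term in terms):
            # An empty list means the summary never cites this response.
            report[respondent_id] = sorted(
                topic for topic, ids in summary.items() if respondent_id in ids
            )
    return report
```

The safety terms themselves are a judgment call for the project team and would need Arabic equivalents for the Arabic stream.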

B. Community-level learnings

Increasing Community Engagement Through In-Person Outreach: To increase engagement and participation, the team could have used additional outreach such as flyers, door-to-door visits, and social media. The team also hypothesizes that some community members did not complete the survey because it mixed English with Arabic. In future, the survey could be conducted entirely in Arabic, backed by a more consistent "ground game" that introduces residents to the new technology and encourages participation.

Accessibility and Gatekeeping: Traditional outreach and surveying in high-density, crisis-affected urban areas like Tripoli often fail to capture the "quietest" voices. Chatbot-powered community sensemaking can help in two ways:

Accessibility: Reaching residents who are homebound, disabled, or living in security-sensitive zones.

Gatekeeping: Bypassing local "influentials" who often filter community needs to suit political agendas.

Informing In-Person Engagement: The chatbot gathered the information and T3C analyzed it, and data collection was completed within a single week. We recommend reviewing the resulting insights and clusters for high-level analysis, and also checking the disaggregated claims for urgent needs that call for specific service responses in the community (e.g., sanitation and health issues). However, the host organization must then have the capacity to respond, or to refer issues to the relevant entities; otherwise, it risks raising community members' expectations without meeting them. Clear communication about the project scope is critical.

The "Power Mapping" Question: We recommend that organizations using chatbots to survey community needs identify "influentials" and key municipal focal points who can promote future action based on community outputs. SHiFT engaged the municipality early in the project to bring it on board with the community-identified priorities.

Trauma-Informed Logic & Content Warnings: The script should include safety guardrails, content notices, and trauma-informed questions. The survey should offer "exit ramps" that let participants stop at any point. By explicitly stating, "You don't need to mention painful or sensitive details," the chatbot respects users' boundaries and reduces the risk that the data collection process itself triggers distress.

The Bot as Safety Net & Referral Mechanism: In a crisis zone, a survey can be a helpful resource as well as a collection tool. Where possible, the closeout section should act as a safety net by providing immediate hotlines for Child Protection, Gender-Based Violence, and Mental Health and Psychosocial Support. We also recommend that future chatbot-powered surveys be programmed to "red flag" specific keywords such as "paralysis," "sewage mixing," or "collapse" for immediate human review, with an escalation pathway for raising these issues with the municipality.
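
The red-flag step could look like the sketch below, which screens each incoming message against the example keywords named above and queues matches for human review. The `EscalationQueue` class is hypothetical, the keyword list is English-only (Arabic equivalents would need to be added by native speakers), and matching on word starts means "collapsed" is caught by "collapse". Keyword matching will miss paraphrases, so it supplements rather than replaces the human audit.

```python
import re
from dataclasses import dataclass, field
from typing import Dict, List

# Example keywords from this case study; a real list would be longer and bilingual.
RED_FLAG_TERMS = ["paralysis", "sewage mixing", "collapse"]
# Anchor only the start of each term so inflected forms ("collapsed") still match.
RED_FLAG = re.compile(
    r"\b(?:" + "|".join(map(re.escape, RED_FLAG_TERMS)) + ")", re.IGNORECASE
)


@dataclass
class EscalationQueue:
    """Hypothetical queue of messages awaiting human review."""
    items: List[Dict[str, object]] = field(default_factory=list)

    def screen(self, respondent_id: str, text: str) -> bool:
        """Queue the message if it contains any red-flag term; return whether it did."""
        hits = sorted({m.group(0).lower() for m in RED_FLAG.finditer(text)})
        if hits:
            self.items.append({"respondent_id": respondent_id, "terms": hits, "text": text})
        return bool(hits)
```

A reviewer working from the queue could then follow the escalation pathway to the municipality and, where relevant, send the participant the closeout hotlines.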

VI. Conclusion

Overall, the project was a valuable test of how chatbot-enabled community sensemaking can rapidly surface priorities, broaden participation, and inform more focused in-person engagement. Using a familiar interface like WhatsApp increased accessibility and adoption; however, reliance on large technology providers introduced administrative delays and approval bottlenecks that must be factored into future timelines. Future engagements should position the chatbot at the top of the community consultation process to fully capture and prioritize needs before deeper dialogue begins. With stronger on-the-ground outreach, this approach can deepen participation and ensure that digital engagement not only gathers insight efficiently but also translates community voice into credible, responsive action.